Sonification is the design of sounds to provide useful information. Dataforms are physical objects constructed from digital datasets. Can we combine these ideas to create dataforms with acoustic properties that provide useful information? We will refer to this combination as an Acoustic Sonification.

This paper explores and develops the idea of Acoustic Sonification through a series of experiments that map a head-related transfer function (HRTF) dataset onto the shape of a bell. The dataset consists of 100K data points measured from a KEMAR dummy; the bell is constructed in three-dimensional CAD software and then digitally fabricated in stainless steel. The tones produced by the Left and Right HRTF bells are compared with each other and with a Null bell. The pitch and timbre of the Left and Right bells are perceptibly different from each other and from the Null template bell.

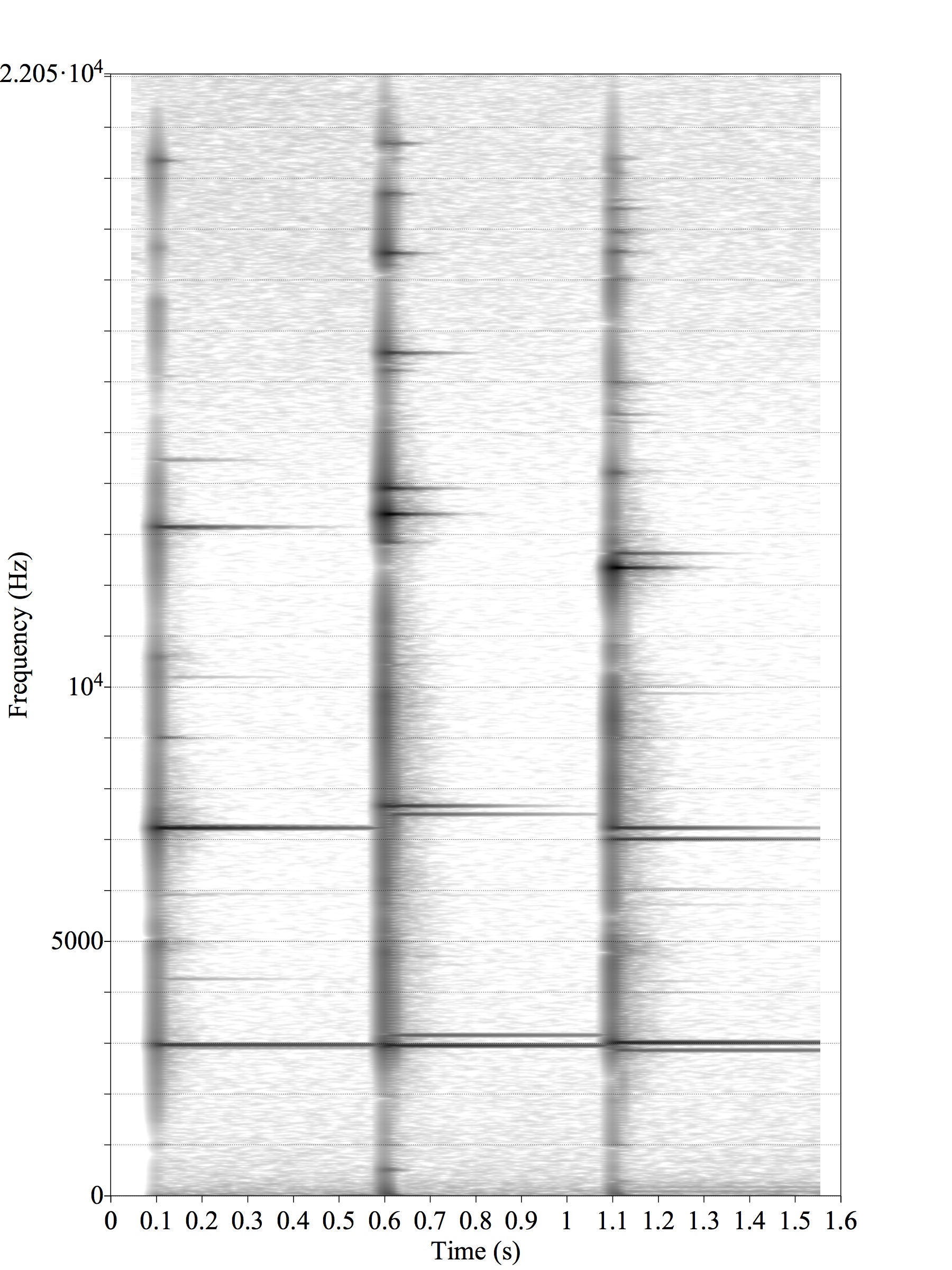

The spectra of the HRTF bells have a double harmonic series that distinguishes them from the Null bell. These results suggest that the HRTF bells could be used to compare and classify HRTF datasets that are difficult to distinguish visually. This supports the hypothesis that Acoustic Sonifications could provide useful information about a general range of datasets: a bell sounds an entire dataset of 100K points immediately through acoustics, without the computational delays of digital synthesis methods or the need for electricity.